Artificial intelligence is changing everything from banking to healthcare, but when AI gets it wrong in cybersecurity, the risks can be devastating.

AI mistakes in security don't just skew analytics—they create blind spots, undermine trust, and can leave organisations dangerously exposed to threats.

In this guide, we’ll break down the problem, highlight real-world examples, share expert advice, and equip you with best practices for catching and correcting AI flaws—because in this AI-powered age, your cyber resilience depends on getting AI right.

Why AI Mistakes Matter More Than Ever

Today, businesses and security teams depend on AI for:

But here’s the kicker: poorly trained or biased algorithms can be weaponised by attackers, ultimately making your defence systems part of the problem. AI doesn’t just make “honest mistakes”. It can reinforce gaps, miss new cyberattack techniques and, in the worst cases, turn small oversights into massive breaches.

Key Stats & Industry Insights

Classic Cases: When AI Gets It Wrong

Case Study 1: Phantom Threat Detection

A large retailer’s AI-powered security system flagged legitimate user behaviour as an insider attack, causing widespread account lockouts and business disruption. Engineers discovered that the model had overfit to outdated attack patterns and failed to adapt to new workflows.

Case Study 2: AI-Enabled Model Poisoning

At a trading firm, a tampered AI model misclassified stock options, leading to a $400 million error. A lack of production monitoring and unchecked model drift were the culprits.

Case Study 3: Bypassed by Adversaries

Darktrace and Google’s teams showed that attackers can use techniques like data poisoning, adversarial prompts, and exploitation of AI “blind spots” to sneak malware past even leading AI-based defences.

Why Does AI Get It Wrong in Cybersecurity?

Benefits of Getting AI Right in Security

Step-By-Step Guide: How to Minimise AI Failure in Security

Step 1. Start With Quality Data

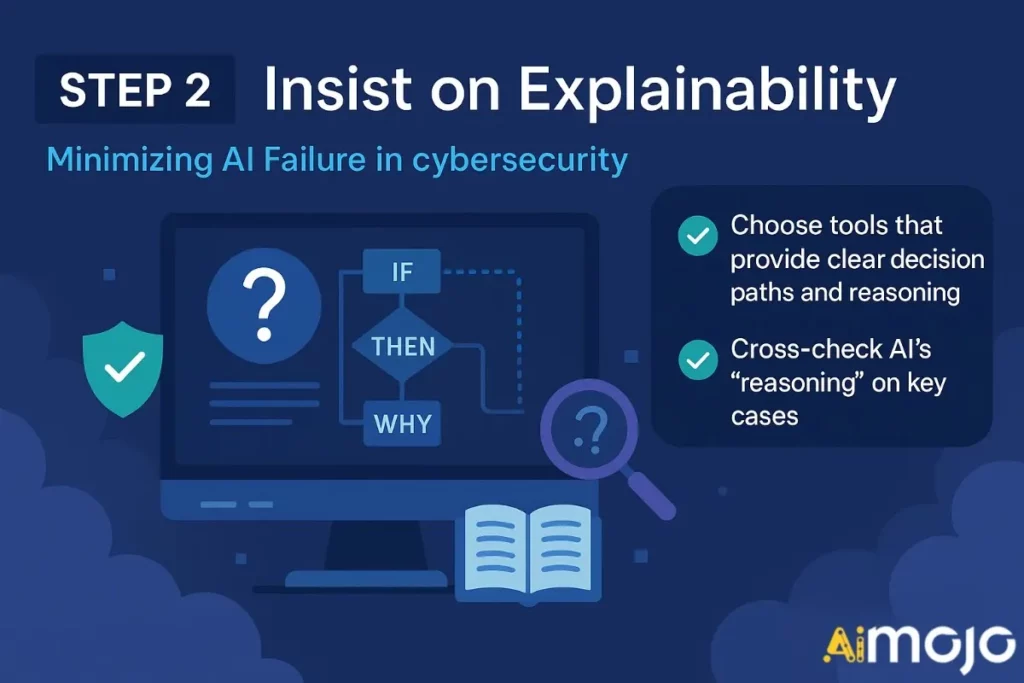

Step 2. Insist on Explainability

Step 3. Regular Human Oversight

Maintain “red teams” and security analysts to review AI decisions, especially on alerts the model flagged and alerts it ignored.
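One way to put this into practice is to send every flagged alert to analysts along with a random sample of the alerts the model ignored, so that missed threats have a chance of surfacing. The sketch below is illustrative only: the `Alert` fields, the `build_review_queue` helper, and the 5% default sample rate are assumptions, not part of any specific product.

```python
import random
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Alert:
    alert_id: str
    score: float   # hypothetical model threat score in [0, 1]
    flagged: bool  # True if the AI escalated the alert


def build_review_queue(
    alerts: List[Alert],
    ignored_sample_rate: float = 0.05,  # assumed default, tune to analyst capacity
    seed: Optional[int] = None,
) -> List[Alert]:
    """Return all flagged alerts plus a random sample of ignored ones."""
    rng = random.Random(seed)
    flagged = [a for a in alerts if a.flagged]
    ignored = [a for a in alerts if not a.flagged]

    if ignored:
        # Always review at least one ignored alert when any exist.
        k = max(1, round(len(ignored) * ignored_sample_rate))
        k = min(k, len(ignored))
    else:
        k = 0

    return flagged + rng.sample(ignored, k)
```

Sampling the ignored alerts is the design choice that matters here: reviewing only what the model flags can never reveal what it is missing.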

Step 4. Monitor For Drift and Poisoning

Implement tools that track AI model performance and detect manipulation attempts.

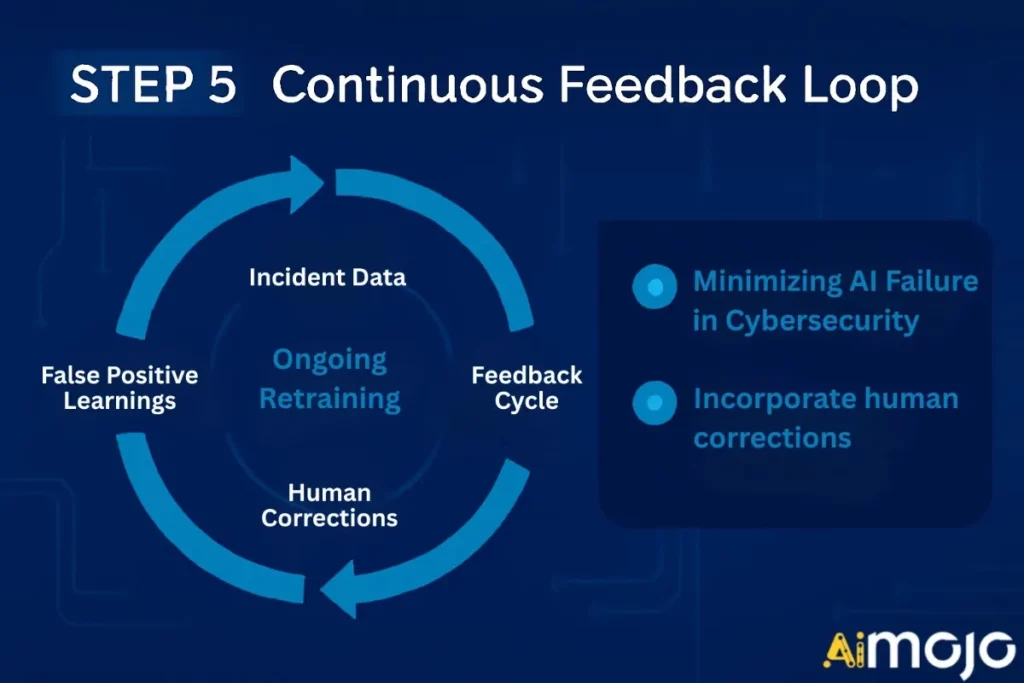
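As a rough illustration of drift monitoring, the sketch below compares the distribution of a model's recent threat scores against a baseline using the Population Stability Index (PSI). It assumes scores lie in [0, 1] and uses ten equal-width bins; both are choices made for this example. A high PSI signals that the score distribution has shifted, which may point to drift or to tampered input data, but it does not identify the cause on its own.

```python
import math
from typing import List, Sequence


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
) -> float:
    """PSI between two samples of model scores, assumed to lie in [0, 1]."""
    eps = 1e-6  # floor for empty bins so the logarithm stays defined

    def bin_fractions(scores: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for s in scores:
            idx = min(int(s * bins), bins - 1)  # a score of 1.0 goes in the last bin
            counts[idx] += 1
        total = len(scores)
        return [max(c / total, eps) for c in counts]

    expected = bin_fractions(baseline)
    actual = bin_fractions(current)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


# Example: baseline scores spread evenly, recent scores piled up at the high end.
baseline_scores = [0.05 + 0.1 * i for i in range(10)] * 10
recent_scores = [0.85, 0.95] * 50
psi = population_stability_index(baseline_scores, recent_scores)
```

A PSI above roughly 0.25 is often treated as a significant shift worth investigating, though that threshold is a rule of thumb and should be tuned to your own data.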

Step 5. Continuous Feedback Loop

Incorporate human corrections, incident data, and false-positive learnings into ongoing retraining.

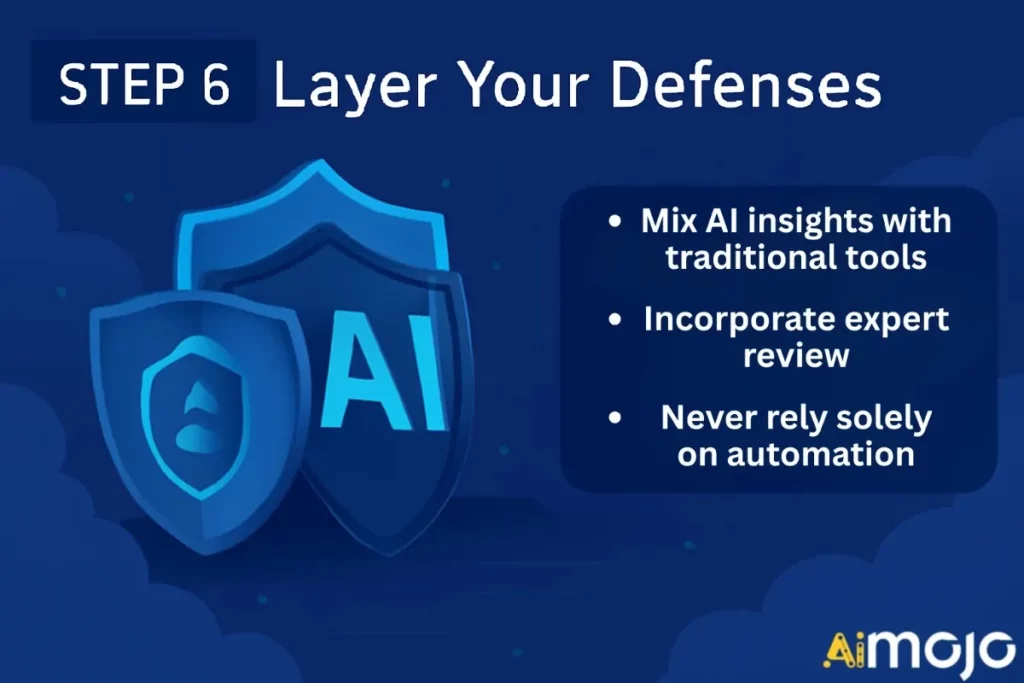
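A minimal sketch of such a feedback loop, assuming training labels are stored as a mapping from event ID to a 0/1 label (benign/malicious): analyst corrections overwrite the stored labels before the next retraining run, and the share of corrections that overturned an existing label is reported as a simple health signal. The function name and data shapes are hypothetical.

```python
from typing import Dict, Tuple


def apply_analyst_corrections(
    training_labels: Dict[str, int],
    corrections: Dict[str, int],
) -> Tuple[Dict[str, int], float]:
    """Merge analyst corrections into the label set.

    Returns the updated labels and the fraction of corrections that
    overturned a label already in the training set.
    """
    updated = dict(training_labels)  # leave the caller's data untouched
    overturned = 0
    for event_id, label in corrections.items():
        if event_id in updated and updated[event_id] != label:
            overturned += 1
        updated[event_id] = label

    rate = overturned / len(corrections) if corrections else 0.0
    return updated, rate
```

A rising overturn rate suggests the model's labels are diverging from analyst judgement, which is a reasonable trigger for retraining sooner.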

Step 6. Layer Your Defence

Mix AI insights with traditional tools and expert review, and never rely solely on automation.
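The layering idea can be sketched as a small decision function: act automatically only when the AI score and a traditional rule-based signal agree, and route everything else to a human. The thresholds and the `layered_verdict` name are assumptions for illustration, not recommended values.

```python
def layered_verdict(
    ai_score: float,                # model threat score in [0, 1]
    rule_hit: bool,                 # True if a signature or rule-based tool matched
    block_threshold: float = 0.9,   # assumed value
    allow_threshold: float = 0.2,   # assumed value
) -> str:
    """Combine an AI score with a rule-based signal into one verdict."""
    if rule_hit and ai_score >= block_threshold:
        return "block"          # both layers agree the event is malicious
    if not rule_hit and ai_score <= allow_threshold:
        return "allow"          # both layers agree the event is benign
    return "human_review"       # layers disagree or the model is unsure
```

For example, `layered_verdict(0.95, rule_hit=False)` returns `"human_review"`, so a confident model cannot block on its own when the traditional tooling sees nothing.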

Top Tools & Resources for AI Error Detection in Cybersecurity

| Tool/Resource | Primary Function | Unique Strength |
|---|---|---|
| Darktrace | Threat detection | Autonomous self-learning, deep learning |
| IBM Watson for Cybersecurity | Threat analysis & intelligence | Advanced NLP, large-scale data synthesis |
| CrowdStrike Falcon | Endpoint security | Real-time malware prevention |
| Microsoft Security Copilot | Security automation & investigation | Context-driven, AI-powered insights |
| PentestGPT | Automated penetration testing | AI-driven recommendations, reporting |

💡 Pro Tips to Keep Your AI Defence Sharp

Frequently Asked Questions

Can AI errors be completely avoided in cybersecurity?

Not entirely. AI will make mistakes, but regular oversight, diverse input data, and robust monitoring can drastically reduce risk.

Should we rely solely on AI for our security?

No. AI is a powerful ally, but works best alongside human expertise and traditional security controls.

How fast are attackers adopting AI?

Very quickly. From generative malware to deepfakes, cybercriminals are outpacing defenders, making AI vigilance a must.

Conclusion

When AI gets it wrong, the consequences ripple across your entire organisation, from missed breaches to loss of customer trust. Well-trained, explainable AI, always paired with human oversight, is the real game changer in cybersecurity.

Want to bulletproof your business?

Start by evaluating your security AI today. Audit for blind spots, push for explainability, and don’t let automation lull you into complacency. If you’re ready to take control, act now—because getting AI right is not just smart, it’s essential.

Boost your cyber resilience by upskilling your team, demanding transparent AI solutions, and joining the conversation. The future belongs to those who trust—but also verify—their AI.

BONUS: Get our $200 “AI Mastery Toolkit” FREE when you sign up!
