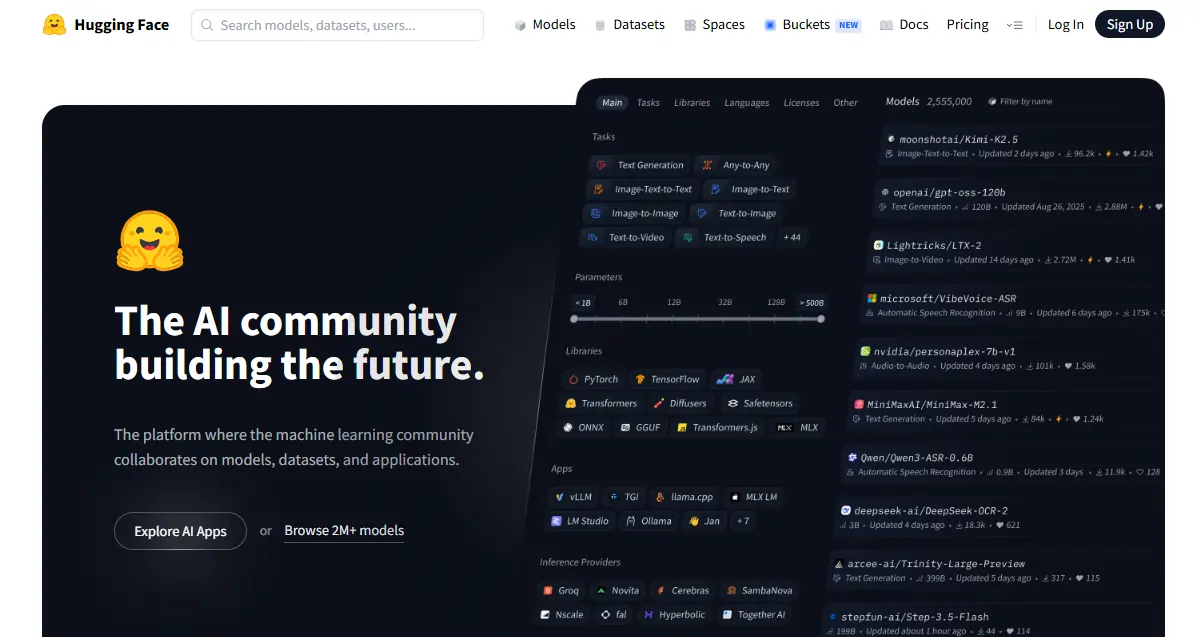

Most people land on Hugging Face, stare at a wall of model names, and click away within 30 seconds. Big mistake.

While everyone argues about which AI tool is worth paying for, tens of thousands of builders are quietly using Hugging Face to run, fine-tune, and ship AI-powered apps, completely free. It's not just a model library. It's the platform where Google, Meta, Mistral, and solo developers all work in the same space.

Over 1 million models, 500K+ datasets, and free app hosting, all under one account. Here's the complete breakdown of what it is and how to actually use it.

What Hugging Face Actually Is (Most People Get This Wrong)

The "GitHub of Machine Learning" label gets thrown around a lot. It holds in one direction: public repos, version control, community contributions. But it falls apart fast. Hugging Face also runs live inference, hosts AI-powered apps, and provides full training infrastructure. GitHub does none of that.

The company itself started as an NLP chatbot startup, pivoted into open-source AI tooling, and never looked back. The public platform is free and community-driven; the enterprise products are how they make money. For beginners, the free tier covers everything you need. Models get published here before they make headlines; if something new drops in open-source AI, it usually shows up on Hugging Face first.

The Three Pillars — Know These Before Anything Else

Everything on Hugging Face sits inside three core sections:

| Pillar | What It Is | Why It Matters |
|---|---|---|
| Models | 1M+ pre-trained AI models | Skip training from scratch entirely |
| Datasets | Raw data for training & testing | Standardized, ready-to-load data |
| Spaces | Free hosted AI apps | Test models without touching deployment code |

Get comfortable with all three — they connect constantly as you build.

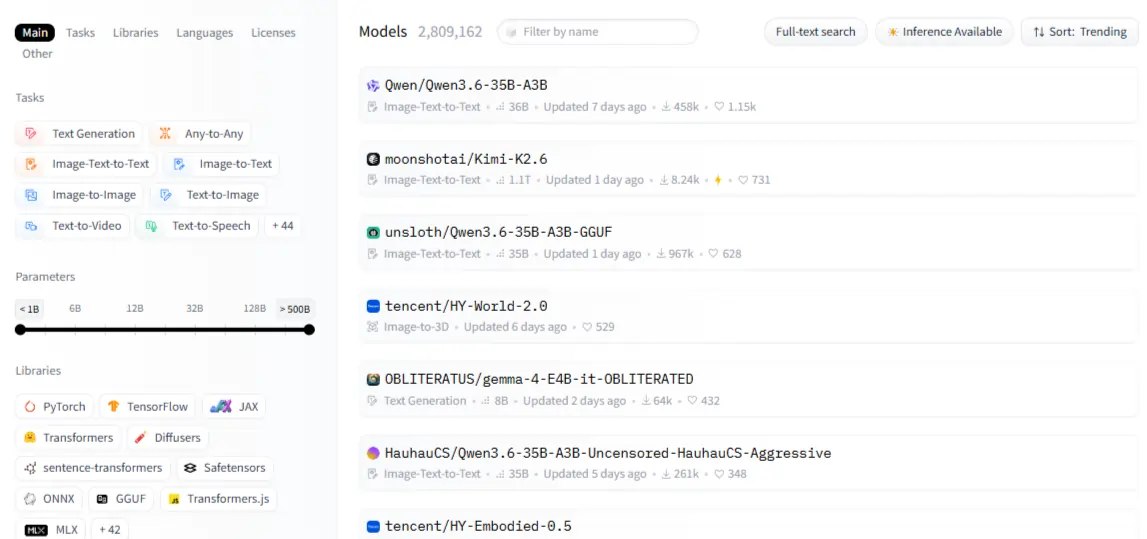

The Model Hub — Where You'll Spend Most of Your Time

The filter panel is your best friend here: task type, framework (PyTorch, TensorFlow, JAX), language, license, and model size. Sort by Most Downloads for battle-tested picks; sort by Recently Updated when you need fresh options.

Every model has a card, so read it. The intended use section tells you what the model was built for; the limitations section tells you where it breaks. That second part is more valuable than any benchmark score. Model categories span NLP (text classification, summarization, translation, question answering), vision (image classification, object detection, generation), audio (ASR, TTS), and multimodal tasks like visual question answering.

One thing beginners miss: not all models are freely downloadable. Gated models like Meta's Llama require approval before access. Once approved, you authenticate with an access token. Always check the license before building, because some models ban commercial use entirely.

The Transformers Library — The Code Running Half the AI World

The transformers library is a unified Python package that standardizes how you load and run models from the hub, with the same API across PyTorch, TensorFlow, and JAX.

The pipeline() function is where most beginners should start: it wraps tokenization, model loading, and post-processing into a single call. Sentiment analysis, text generation, and image classification all follow the exact same pattern. The moment you need fine-grained control over outputs, drop down to writing custom inference code. Until then, pipelines handle everything.
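A minimal sketch of that single call, assuming the transformers package and a backend such as PyTorch are installed. The helper names classify and top_label are illustrative and not part of the library; the import sits inside the function so that defining the helpers does not load the model stack.

```python
def classify(texts, model_id=None):
    """Run the text-classification pipeline over a list of strings."""
    # Imported lazily: importing transformers also loads a deep-learning backend.
    from transformers import pipeline

    # With model=None the library picks its default checkpoint for the task.
    classifier = pipeline("text-classification", model=model_id)
    return classifier(texts)  # one {"label": ..., "score": ...} dict per input


def top_label(result):
    """Format one pipeline result dict as 'LABEL (score)'."""
    return f"{result['label']} ({result['score']:.2f})"
```

Calling classify(["I love this library."]) downloads the checkpoint on first use and returns a list whose items can be passed one by one to top_label.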

Don't skip tokenization. Raw text can't go directly into a model. AutoTokenizer handles the conversion and always matches the right tokenizer to the right checkpoint automatically. Mismatched tokenizers cause the most confusing errors beginners run into — and they're 100% avoidable.
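To see why a mismatched tokenizer is so confusing, here is a toy illustration with two made-up vocabularies, followed by a sketch of loading the tokenizer and model from the same checkpoint name. toy_encode, VOCAB_A, VOCAB_B, and load_matching_pair are hypothetical names for this example; only AutoTokenizer and AutoModelForSequenceClassification come from transformers.

```python
def toy_encode(text, vocab):
    """Toy whitespace tokenizer: map each word to its ID, unknown words to 0."""
    return [vocab.get(word, 0) for word in text.lower().split()]


# Two hypothetical vocabularies that assign different IDs to the same words.
VOCAB_A = {"good": 1, "movie": 2}
VOCAB_B = {"movie": 1, "good": 2}


def load_matching_pair(checkpoint):
    """Load tokenizer and model from the same checkpoint so their IDs agree."""
    # Imported lazily so the toy example above runs without transformers.
    from transformers import AutoModelForSequenceClassification, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(checkpoint)
    model = AutoModelForSequenceClassification.from_pretrained(checkpoint)
    return tokenizer, model
```

The same sentence becomes different ID sequences under the two vocabularies, so a model trained against one would silently read the wrong words under the other. No exception is raised; the predictions are just wrong.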

| Task | Pipeline Name | Example Model |
|---|---|---|
| Sentiment analysis | text-classification | distilbert-base-uncased |
| Text generation | text-generation | Mistral-7B |
| Summarization | summarization | facebook/bart-large-cnn |
| Speech recognition | automatic-speech-recognition | openai/whisper-base |
| Image classification | image-classification | google/vit-base-patch16 |

Datasets and Spaces — The Two Features Nobody Uses Enough

The datasets library loads data in Apache Arrow format: fast, memory-efficient, and built to handle datasets that don't fit in RAM. load_dataset("name", split="train") is all it takes to get started. Before you commit to any dataset for a training run, use Data Studio in the browser to preview and filter it without writing a single line of code.
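A short sketch of peeking at a dataset from code, assuming the datasets package is installed. It uses the library's streaming=True option, which is a separate mechanism from the Arrow-backed loading described above, and the helper names stream_split and preview are illustrative.

```python
from itertools import islice


def stream_split(name, split="train"):
    """Open a hub dataset lazily instead of downloading it in full."""
    # Imported lazily so preview() below works on any iterable of rows.
    from datasets import load_dataset

    # streaming=True yields rows on demand rather than materializing the split.
    return load_dataset(name, split=split, streaming=True)


def preview(rows, n=3):
    """Return the first n rows from any iterable of row dicts."""
    return list(islice(rows, n))
```

preview(stream_split("name")) would then fetch only the first few rows, with "name" standing in for a real dataset identifier.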

Spaces is where AI demos go live for free. Your app gets a shareable URL in minutes with zero DevOps work. The free CPU tier handles lightweight demos; paid GPU-backed Spaces handle heavier models.

Use Gradio for fast model demos with minimal code; use Streamlit when your app needs a more data-heavy dashboard layout. Cloning a trending Space is the fastest way to start: pick one in your category, fork it, and customize.
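As a rough idea of how little code a Gradio demo needs, here is a sketch assuming the gradio package is installed. The shout function is a placeholder standing in for a real model call, and build_demo is a hypothetical helper name.

```python
def shout(text: str) -> str:
    """Stand-in for a model call; replace with a pipeline-backed function."""
    return text.upper()


def build_demo(fn=shout):
    """Wrap a text-in, text-out function in a Gradio interface."""
    # Imported lazily so the prediction function can be tested without gradio.
    import gradio as gr

    return gr.Interface(fn=fn, inputs="text", outputs="text", title="Demo")
```

build_demo().launch() starts a local server. In a Gradio Space, that call would typically sit at the bottom of app.py, though the exact file layout is an assumption worth checking against the Spaces documentation.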

Setting Up Your Account the Right Way

Free tier covers model browsing, CPU Spaces, rate-limited API calls, and full community access. Pro adds priority GPU Spaces, expanded inference, and private repos. For most beginners, free is enough.

Generate an access token under settings → Access Tokens. Read tokens work for downloading; write tokens are needed for pushing models or datasets. Authenticate in Python with huggingface_hub.login(). For your install:

pip install transformers datasets huggingface_hub

Add accelerate, peft, and trl if fine-tuning is on the roadmap. Google Colab is the fastest environment for absolute beginners: free GPU, nothing to configure locally.
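The token step can be sketched as follows, assuming the token is stored in the HF_TOKEN environment variable rather than pasted into source code. read_token and authenticate are illustrative helper names; login is the huggingface_hub function mentioned above.

```python
import os


def read_token(env=None):
    """Fetch the access token from HF_TOKEN, failing loudly if it is missing."""
    env = os.environ if env is None else env
    token = env.get("HF_TOKEN", "").strip()
    if not token:
        raise RuntimeError("HF_TOKEN is not set; create a token under Settings > Access Tokens.")
    return token


def authenticate():
    """Log this machine in to the hub with the token from the environment."""
    # Imported lazily so read_token() works without huggingface_hub installed.
    from huggingface_hub import login

    login(token=read_token())
```

Once authenticate() has run, downloads of gated models you have been approved for should work from the same environment.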

Running Your First Model, Then Making It Yours

For sentiment analysis: call pipeline("text-classification"), pass a string, and read the label and score back. For text generation: use max_new_tokens, temperature, and do_sample to control how creative vs. consistent the output is. The same pipeline() pattern works for translation, speech recognition, and image classification; the API doesn't change, only the task name does.
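The three generation knobs can be bundled like this. It is a sketch, assuming transformers is installed; the temperature value and the helper names generation_settings and generate are illustrative choices, not recommendations from the library.

```python
def generation_settings(creative=False, max_new_tokens=64):
    """Build keyword arguments for a text-generation pipeline call."""
    if creative:
        # Sampling with a higher temperature gives more varied output.
        return {"max_new_tokens": max_new_tokens, "do_sample": True, "temperature": 0.9}
    # Greedy decoding: the same prompt yields the same continuation.
    return {"max_new_tokens": max_new_tokens, "do_sample": False}


def generate(prompt, model_id, creative=False):
    """Generate a continuation for prompt with the chosen checkpoint."""
    from transformers import pipeline  # lazy import; loads the model stack

    generator = pipeline("text-generation", model=model_id)
    outputs = generator(prompt, **generation_settings(creative))
    return outputs[0]["generated_text"]
```

Temperature only has an effect when do_sample is True, which is why the consistent preset leaves it out.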

When things break:

Once the basics click, fine-tuning is the next move. Pre-trained models are general; fine-tuned models are precise. Fine-tuning beats prompting when you're working with domain-specific data, need consistent behavior, or want to cut inference costs by running a smaller specialized model.

PEFT freezes most of the model and only trains lightweight adapters — no $10K GPU required. QLoRA takes it further with quantization, making 7B parameter model fine-tuning possible on a single consumer GPU.
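A back-of-the-envelope sketch of why adapters are cheap, using LoRA, one of the adapter methods in the peft library. The two counting functions are plain arithmetic; wrap_with_lora assumes peft is installed and that the model's architecture is one peft has default target modules for. The hyperparameters are illustrative.

```python
def full_params(d_in, d_out):
    """Weights in one dense layer of shape d_out x d_in."""
    return d_in * d_out


def lora_params(d_in, d_out, rank):
    """Weights in a rank-r adapter: A is rank x d_in, B is d_out x rank."""
    return rank * (d_in + d_out)


def wrap_with_lora(model, rank=8):
    """Attach LoRA adapters to a loaded causal language model."""
    from peft import LoraConfig, get_peft_model  # lazy import

    # Hyperparameters are illustrative. Target modules are left to peft's
    # defaults, which exist only for architectures it recognizes.
    config = LoraConfig(r=rank, lora_alpha=2 * rank, lora_dropout=0.05, task_type="CAUSAL_LM")
    return get_peft_model(model, config)
```

For a single 4096 x 4096 layer at rank 8, the adapter has 65,536 trainable weights against 16,777,216 frozen ones, roughly 0.4% of the layer.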

The Trainer API manages the entire loop — batching, evaluation, checkpointing — and pushing back to the hub takes one line when you're done.

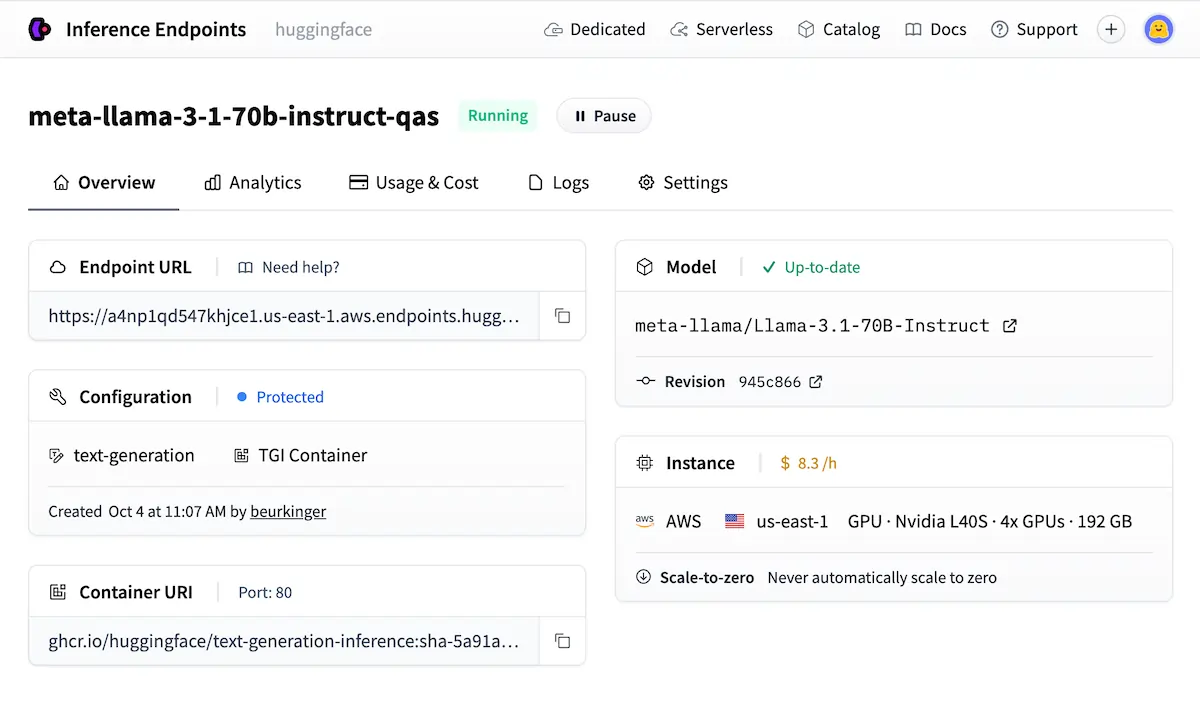

Inference Without Your Own Server

The hosted Inference API gives you a REST endpoint for any public model instantly. The free tier is rate-limited: fine for testing, not for production. For real applications, Inference Endpoints provide a dedicated, private API that can scale to zero when idle, keeping costs manageable for variable traffic.
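Here is a sketch of what a call to such a REST endpoint could look like, using only the standard library. The base URL is an assumption based on the address historically documented for the hosted API and may have changed, so confirm it against the current inference docs. build_inference_request is a hypothetical helper that prepares the request without sending it.

```python
import json
from urllib.request import Request

# Assumed base URL; confirm against the current Hugging Face inference docs.
DEFAULT_BASE_URL = "https://api-inference.huggingface.co/models"


def build_inference_request(model_id, text, token, base_url=DEFAULT_BASE_URL):
    """Prepare (but do not send) a POST request for a hosted model."""
    payload = json.dumps({"inputs": text}).encode("utf-8")
    headers = {
        "Authorization": f"Bearer {token}",
        "Content-Type": "application/json",
    }
    return Request(f"{base_url}/{model_id}", data=payload, headers=headers, method="POST")
```

Passing the returned object to urllib.request.urlopen sends it. Keeping the base URL as a parameter means the same helper can point at a dedicated endpoint instead.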

When data privacy or latency is non-negotiable, self-hosting with TGI (Text Generation Inference) or vLLM is the production-ready path.

The Community, the Leaderboards, and Why It Beats Everything Else

The Open LLM Leaderboard ranks models by benchmark: useful for shortlisting, but always validate on your actual use case before trusting scores. Organization accounts let teams manage shared model collections with controlled access; Meta AI, Google, and EleutherAI all run org accounts directly on the hub.

Following researchers and orgs gives you a real-time feed of new model releases without needing to monitor social media.

| Platform | Open Source | Model Variety | Free Tier | Fine-Tuning Tools |
|---|---|---|---|---|
| Hugging Face | ✅ Full | ✅ 1M+ | ✅ Generous | ✅ Full stack |
| TensorFlow Hub | ✅ Yes | 🔶 Limited | ✅ Yes | ❌ Basic |
| Google Model Garden | ❌ Partial | 🔶 Curated | 🔶 GCP only | 🔶 GCP only |
| OpenAI API | ❌ No | ❌ Closed | ❌ Paid only | 🔶 Limited |

Mistakes That'll Cost You Hours

- Grabbing the biggest model when a smaller, task-specific one runs faster and cheaper

- Skipping the model card's limitations section before building anything on top of it

- Not pinning model revisions — models update silently and outputs shift without warning

- Using the free Inference API for anything that needs consistent production uptime

- Passing raw text directly into a model without running it through a tokenizer first
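The revision-pinning point can be sketched like this, assuming transformers is installed. from_pretrained accepts a revision argument; the is_pinned check and the load_pinned wrapper are hypothetical helpers that insist on a full commit hash, since branch names can move.

```python
import re

_FULL_SHA = re.compile(r"^[0-9a-f]{40}$")


def is_pinned(revision):
    """True if revision is a full 40-character commit hash, not a branch or tag."""
    return bool(revision) and _FULL_SHA.match(revision) is not None


def load_pinned(model_id, revision):
    """Load tokenizer and model at one exact commit of the repository."""
    if not is_pinned(revision):
        raise ValueError("Pass a full commit hash; branch names such as 'main' can move.")
    from transformers import AutoModel, AutoTokenizer  # lazy import

    tokenizer = AutoTokenizer.from_pretrained(model_id, revision=revision)
    model = AutoModel.from_pretrained(model_id, revision=revision)
    return tokenizer, model
```

The commit hash for a model is listed in the repository's commit history on the hub.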

Where to Go From Here

Hugging Face's free courses at hf.co/learn cover NLP, audio, and deep reinforcement learning in structured paths built specifically for this platform. The best first project: fine-tune a text classifier on a custom dataset, wrap it in Gradio, and deploy it as a Space.

That single build touches models, datasets, fine-tuning, and Spaces in one shot. Once it's live, upload the model and write a proper model card — covering intended use, training data, and limitations.

That's how useful public contributions get made, and it's how you start building a real presence in the open-source AI space.
